Today’s links

- Three more AI psychoses: Everybody calm down.

- Hey look at this: Delights to delectate.

- Object permanence: “Jules, Penny and the Rooster”; Superinjunction; Harper Lee’s kids v cheap paperbacks; 3D printed cat battle-armor; Black sf.

- Upcoming appearances: Where to find me.

- Recent appearances: Where I’ve been.

- Latest books: You keep readin’ ’em, I’ll keep writin’ ’em.

- Upcoming books: Like I said, I’ll keep writin’ ’em.

- Colophon: All the rest.

Three more AI psychoses

“AI psychosis” is one of those terms that is incredibly useful and also almost certainly going to be deprecated in smart circles in short order because it is: a) useful; b) easily colloquialized to describe related phenomena; and c) adjacent to medical issues, and there’s a group of people who feel very strongly that any metaphor that implicates human health is intrinsically stigmatizing and must be replaced with an awkward, lengthy phrase that no one can remember and only insiders understand.

So while we still can, let us revel in this useful term to talk about some very real pathologies in our world.

Formally, “AI psychosis” describes people who have delusions that are possibly induced, and definitely reinforced and magnified, by a chatbot. AI psychosis is clearly alarming for people whose loved ones fall prey to it, and it has been the subject of much press and popular attention, especially in the extreme cases where it has resulted in injury or death.

It’s possible for AI psychosis to be both a new and alarming phenomenon and also to be on a continuum with existing phenomena. Paranoid delusions aren’t new, of course. Take “Morgellons Disease,” a psychosomatic belief that you have wires growing in your body, which causes sufferers to pick at their skin to the point of creating suppurating wounds. Morgellons emerged in the 2000s, but the name refers to a 17th-century case-report of a patient who suffered from a similar delusion:

https://en.wikipedia.org/wiki/A_Letter_to_a_Friend

Morgellons is both a 400-year-old phenomenon and an internet pathology. How can that be? Because the internet makes it easier for people with sparsely distributed traits to locate one another, which is why the internet era is characterized by the coherence of people with formerly fringe characteristics into organized blocs, for better (gender minorities, #MeToo) and worse (Nazis).

Morgellons is rare, but if you suffer from it, it’s easy for you to locate virtually every other person in the world with the same delusion and for all of you to reinforce and egg on your delusional beliefs.

Morgellons isn’t the only delusion that the internet reinforces, of course. “Gang stalking delusion” is a belief in a shadowy gang of sadistic tormentors who sneak hidden messages into song lyrics and public signage and innuendo in overheard snatches of other people’s conversations. It is an incredibly damaging delusion that ruins people’s lives.

Gang stalking delusion isn’t new, either – as with Morgellons, there are historical accounts of it going back centuries. But the internet supercharged gang stalking delusion by making it easy for GSD sufferers to find one another and reinforce one another’s beliefs, helping each other spin elaborate explanations for why the relatives, therapists, and friends who try to help them are actually in on the conspiracy. The result is that GSD sufferers end up ever more isolated from people who are trying mightily to save them, and more connected to people who drive them to self-harm.

Enter chatbots. Ready access to eager-to-please LLMs at every hour of the day or night means that you don’t even have to find a forum full of people with the same delusion as you, nor do you have to wait for a reply to your anguished message. The LLM is always there, ready to fire back a “yes-and” improv-style response that drives you deeper and deeper into delusion:

https://pluralistic.net/2025/09/17/automating-gang-stalking-delusion/

It’s possible that there are delusions that are even more rare than GSD or Morgellons that AI is surfacing. Imagine if you were prone to fleeting delusional beliefs (and whomst amongst us hasn’t experienced the bedrock certainty that we put something down right here, only to find it somewhere else and not have any idea how that happened?). Under normal circumstances, these cognitive misfires might be fleeting moments of discomfort, quickly forgotten. But if you are already habituated to asking a chatbot to explain things you don’t understand, it might well yes-and you into an internally consistent, entirely wrong belief – that is, a delusion.

Think of how often you noticed “42” after reading Hitchhiker’s Guide to the Galaxy, or how many times “6-7” crops up once you’ve experienced a baseline of exposure to adolescents. Now imagine that an obsequious tale-spinner was sitting at your elbow, helpfully noting these coincidences and fitting them into a folie-a-deux mystery play that projected a grand, paranoid narrative onto the world. Every bit of confirming evidence is lovingly cataloged, all disconfirming evidence is discounted or ignored. It’s fully automated luxury QAnon – a self-baking conspiracy that harnesses an AI in service to driving you deeper and deeper into madness.

That’s the original “AI psychosis” that the term was coined to describe. As Sam Cole notes in her excellent “How to Talk to Someone Experiencing ‘AI Psychosis,’” mental health practitioners are not entirely comfortable with the “psychosis” label:

https://www.404media.co/ai-psychosis-help-gemini-chatgpt-claude-chatbot-delusions/

“Psychosis” here is best understood as an analogy, not a diagnosis, and, as already noted, there is a large cohort of very persistent people who make it their business to eradicate analogies that make reference to medical or health-related phenomena. But these analogies are very hard to kill, because they do useful work in connecting unfamiliar, novel phenomena with things we already understand.

It’s true that these analogies can be stigmatizing, but they needn’t be. As someone with an autoimmune disorder, I am not bothered by people who describe ICE as an autoimmune disorder in which antibodies attack the host, threatening its very life. I am capable of understanding “autoimmune disorder” as referring to both a literal, medical phenomenon and a figurative, political one. I have never found myself confusing one for the other.

“AI psychosis” is one of those very useful analogies, and you can tell, because “AI psychosis” has found even more metaphorical uses, describing other bad beliefs about AI. Today, I want to talk about three of these AI psychoses, and how they relate to one another: the investor AI delusion, the boss AI delusion, and the critic AI delusion.

Let’s start with the investors’ delusion. AI started as an investment project from the usual suspects: venture capitalists, private wealth funds, and tech monopolists with large cash reserves and ready access to loans during the cheap credit bubble. These entities are accustomed to making large, long-shot bets, and they were extremely motivated to find new markets to grow into and take over.

Growing companies need to keep growing, but not because they have “the ideology of a tumor.” Growing companies’ imperative to keep growing isn’t ideological at all – it’s material. Growth companies’ stock trades at a high multiple of earnings (a high “price to earnings ratio,” or PE ratio), which means that they can use their stock like money when buying other companies and hiring key employees.

But once those companies’ growth slows down, investors revalue those shares at a much lower PE multiplier, which makes individual executives at the company (who are primarily paid in stock) personally much poorer, prompting their departure, while simultaneously kneecapping the company’s ability to grow through acquisition and hiring, because a company with a falling share price has to buy things with cash, not stock. Companies can make more of their own stock on demand, simply by typing zeroes into a spreadsheet – but they can only get cash by convincing a customer, creditor or investor to part with some of their own:

https://pluralistic.net/2025/03/06/privacy-last/#exceptionally-american
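Here’s a rough sketch of why a falling PE multiple hurts, using entirely hypothetical numbers: the earnings per share, both multiples and the acquisition price are invented for illustration. It shows how many newly issued shares the same acquisition costs before and after investors revalue the stock.

```python
# All figures below are hypothetical, chosen only to illustrate the mechanism.
EARNINGS_PER_SHARE = 5.0           # dollars of annual earnings per share
GROWTH_PE = 40                     # multiple investors pay while growth continues
MATURE_PE = 15                     # multiple after growth slows
ACQUISITION_PRICE = 1_000_000_000  # price of a company the firm wants to buy


def share_price(earnings_per_share: float, pe_ratio: float) -> float:
    """Share price implied by a given price-to-earnings multiple."""
    return earnings_per_share * pe_ratio


def shares_needed(purchase_price: float, price_per_share: float) -> float:
    """Number of shares that must be issued to pay for a purchase in stock."""
    return purchase_price / price_per_share


growth_price = share_price(EARNINGS_PER_SHARE, GROWTH_PE)
mature_price = share_price(EARNINGS_PER_SHARE, MATURE_PE)

for label, price in (("growth", growth_price), ("mature", mature_price)):
    needed = shares_needed(ACQUISITION_PRICE, price)
    print(f"{label}: ${price:,.2f}/share, {needed:,.0f} shares to close the deal")
```

Same earnings, same target, but at the lower multiple the company has to hand over well over twice as many shares to buy the same thing.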

Tech companies have absurdly large market shares – think of Google’s 90% search dominance – and so they’ve spent 15+ years coming up with increasingly absurd gambits to convince investors that they will continue to grow by capturing other markets. At first, these companies claimed that they were on the verge of eating one another’s lunches (Google would destroy Facebook with G+; Facebook would do the same to YouTube with the “pivot to video”).

This has a real advantage in that one need not speculate about the potential value of Facebook’s market – you only have to look at Facebook’s quarterly reports. But the downside is that Facebook has its own ideas about whether Google is going to absorb its market, and it is prone to forcefully making the case that this won’t happen.

After a few tumultuous years, tech giants switched to promoting growth via speculative new markets – metaverse, web3, crypto, blockchain, etc. Speculative new markets are speculative, and the weakness of that is that no one can say how big those markets might be. But that’s also the strength of those markets, because if no one can say how big those markets might be, then who’s to say that they won’t be very big indeed?

There’s a different advantage to confining your concerns to imaginary things: imaginary things don’t exist, so they don’t contest your public statements about them, nor do they make demands on you. Think of how the right concerns itself with imaginary children (unborn babies, children in Wayfair furniture, children in nonexistent pizza parlor basements, children undergoing gender confirmation surgery). These are very convenient children to advocate for, since, unlike real children (hungry children, children killed in the Gaza genocide, children whose parents have been kidnapped by ICE, children whom Matt Gaetz and Donald Trump trafficked for sex, children in cages at the US border, trans kids driven to self-harm and suicide after being denied care), nonexistent children don’t want anything from you and they never make public pronouncements about whether you have their best interests at heart.

But as the AI project has required larger and larger sums to keep the wheels spinning, the usual suspects have started to run out of money, and now AI hustlers are increasingly looking to tap public markets for capital. They want you to invest your pension savings in their growth narrative machine, and they’re relying on the fact that you don’t understand the technology to trick you into handing over your money.

There’s a name for this: it’s called the “Byzantine premium” – that’s the premium that an investment opportunity attracts by being so complicated and weird that investors don’t understand it, making them easy to trick:

https://pluralistic.net/2022/03/13/the-byzantine-premium/

AI is a terrible economic phenomenon. It has lost more money than any other project in human history – $600-700b and counting, with trillions more demanded by the likes of OpenAI’s Sam Altman. AI’s core assets – data centers and GPUs – last 2-3 years, though AI bosses insist on depreciating them over five years, which is unequivocal accounting fraud, a way to obscure the losses the companies are incurring. But it doesn’t actually matter whether the assets need to be replaced every two years, every three years, or every five years, because all the AI companies combined are claiming no more than $60b/year in revenue (that number is grossly inflated). You can’t reach the $700b break-even point at $60b/year in two years, three years, or five years.
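To make that arithmetic concrete, here’s a minimal sketch using only the figures from the paragraph above. It makes two generous assumptions that are mine, not the industry’s: that the claimed $60b/year is real and stays flat, and that every dollar of it is pure profit. The names are made up for illustration.

```python
# Figures from the paragraph above, in billions of US dollars.
LOSSES_SO_FAR = 700             # upper end of the "$600-700b" estimate
CLAIMED_REVENUE_PER_YEAR = 60   # claimed combined annual revenue


def revenue_over_lifetime(years: int) -> int:
    """Revenue collected before the assets need replacing.

    Generous assumption: revenue is flat and every dollar is profit.
    """
    return CLAIMED_REVENUE_PER_YEAR * years


for years in (2, 3, 5):
    total = revenue_over_lifetime(years)
    shortfall = LOSSES_SO_FAR - total
    print(f"{years}-year asset life: ${total}b in, ${shortfall}b short of break-even")
```

Even on those terms, the five-year depreciation schedule still comes up $400b short.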

Now, some exceptionally valuable technologies have attained profitability after an extraordinarily long period in which they lost money, like the web itself. But these turnaround stories all share a common trait: they had good “unit economics.” Every new web user reduced the amount of money the web industry was losing. Every time a user logged onto the web, they made the industry more profitable. Every generation of web technology was more profitable than the last.

Contrast this with AI: every user – paid or unpaid – that an AI company signs up costs them money. Every time that user logs into a chatbot or enters a prompt, the company loses more money. The more a user uses an AI product, the more money that product loses. And each generation of AI tech loses more money than the generation that preceded it.

To make AI look like a good investment, AI bosses and their pitchmen have to come up with a story that somehow addresses this phenomenon. Part of that story relies on the Byzantine premium: “Sure, you don’t understand AI, but why would all these smart people commit hundreds of billions of dollars to AI if they weren’t confident that they would make a lot of money from it?” In other words, “A pile of shit this big must have a pony underneath it somewhere!”

This is a great narrative trick, because it turns losing money into a virtue. If you’ve convinced a mark that the upside of the project is a multiple of the capital committed to it, then the more money you’re losing, the better the investment seems.

So this is the first AI psychosis: the idea that we should bet the world’s economy on these highly combustible GPUs and data centers with terrible unit economics and no path to break-even, much less profitability.

Investors’ AI psychosis is cross-fertilized by our second form of AI psychosis, which is the bosses’ AI psychosis: bosses’ bottomless passion for firing workers and replacing them with automation.

Bosses are easy marks for anything that lets them fire workers. After all, the ideal firm is one that charges infinity for its outputs (hence the market’s passion for monopolies) and pays nothing for its inputs (e.g. “academic publishing”).

This means that the fact that a chatbot can’t do your job isn’t nearly as important as the fact that an AI salesman can convince your boss to fire you and replace you with a chatbot that can’t do your job. Bosses keep replacing humans with defective chatbots, with catastrophic consequences, like Amazon’s cloud service crashing.

Bosses are haunted by the ego-shattering knowledge that they aren’t in the driver’s seat: if the boss doesn’t show up for work, everything continues to operate just fine. If the workers all stay home, the business grinds to a halt. In their secret hearts, bosses know that they’re not in the driver’s seat – they’re in the back seat, playing with a Fisher Price steering wheel. AI dangles the possibility of wiring that toy steering wheel directly into the drive-train, so that the company’s products go directly from the boss’s imagination to the public without the boss having to ask people who know how to do things to execute their cockamamie schemes:

https://pluralistic.net/2026/01/05/fisher-price-steering-wheel/#billionaire-solipsism

This is a powerfully erotic proposition for bosses, the realization of the libidinal fantasy in which sky-high CEO salaries can be justified by the fact that everything that happens in the company is truly, directly attributable to the boss. Like the delusional person who can be led deeper and deeper into a fantasy world by a chatbot, a boss’s delusion that they are worth thousands of times more than their workers makes them easy prey for a chatbot salesman that pushes them deeper and deeper into that delusion, until they bet the whole company on it.

Now we come to the third and final novel AI psychosis, the critics’ psychosis, that AI is an abnormally terrible technology. This is a species of “criti-hype,” which is when critics repeat the hyped-up claims of the companies they’re targeting, but as criticism (think of all the people who believed and uncritically amplified the ad-tech industry’s self-serving claims of being able to control our minds by “hacking our dopamine loops”):

https://peoples-things.ghost.io/youre-doing-it-wrong-notes-on-criticism-and-technology-hype/

AI is a normal technology. The people who made it, and the circumstances under which it was made, are normal. Its uses and abuses are normal. That doesn’t make it good, but it does make it unexceptional:

https://www.normaltech.ai/p/a-guide-to-understanding-ai-as-normal

The exceptional part of AI isn’t the technology, it’s the bubble. There’s nothing about AI per se that makes it exceptionally prone to devouring our natural resources, or endangering our jobs, or abetting war crimes. That’s all because of the bubble, and the bubble relies on the idea that AI is exceptional, not normal. Repeating and amplifying claims about AI’s exceptionalism helps the AI companies, because they rely on exceptionalism to keep the capital flowing and the bubble inflating.

AI is a normal technology. It’s normal for a technology to be invented by unlikable and immoral people and institutions. Not every technology is invented by a shitty person, but shitty people and institutions are well represented (and possibly disproportionately represented) in the history of technology. Charles Babbage invented the idea of general purpose computers as a way of improving labor control on slave plantations:

https://logicmag.io/supa-dupa-skies/origin-stories-plantations-computers-and-industrial-control/

Ada Lovelace wasn’t interested in making slavery more efficient, but neither was she driven by pure scientific inquiry. She invented programming to help her bet on the horses (it didn’t work):

https://en.wikipedia.org/wiki/Ada_Lovelace

The silicon transistor was co-invented by William Shockley, one of history’s great pieces of shit, a eugenicist who was so committed to exterminating all non-white people that he never managed to ship a commercial product:

https://pluralistic.net/2021/10/24/the-traitorous-eight-and-the-battle-of-germanium-valley/

IBM built the tabulators for Auschwitz. HP were the Pentagon’s go-to contractors for any tech project that was so dirty no one else would touch it. We only got Unix because Bell Labs committed so many antitrust violations that they weren’t allowed to productize it themselves.

It’s not exceptional for AI companies to have terrible, piece-of-shit founders. It’s not exceptional for these companies to participate in war crimes. It’s not exceptional for these founders to want to pauperize workers. It’s not exceptional for these companies to lie about their products, bankrupt naive investors through stock swindles, and pitch themselves to investors as a way for capital to win the class war.

None of this means that AI companies are good, it just means that they are not exceptional. And because they aren’t exceptional, the same dynamics that govern other technologies apply to AI companies’ products. Their utility is a function of what they do, not who made them or how they were sold. The utility of AI products is based on whether people find ways to use them that make them happy – not whether the people who made those technologies are good people, or whether the funding for the technology was fraudulent, or whether other people use the technology to harm others.

Automation comes in two flavors: there’s automation that produces things more quickly (and hence more cheaply), and there’s automation that makes better things. Generally, capital prefers to use automation to increase the pace at which things are made, while workers prefer to use automation to improve the quality of the things they make.

Think of a hobbyist who pines for an automated soldering machine. That hobbyist longs to make board-level repairs and modifications that require precision that humans struggle to match. The hobbyist is a centaur, using a machine to help achieve human goals.

Now think of a factory owner who invests in an assembly line of the same machines: that boss wants to fire a bunch of workers and make the survivors of the purge take up the slack. The boss wants to achieve corporate goals, to “sweat the assets,” making maximum use of the soldering machines. The pace at which the line runs is set to be the maximum that the workers can match. The workers on the line are “reverse centaurs” – humans who are pressed into service as peripherals for machines, at a pace that is constantly at the very limit of their endurance.

Reverse centaurs are trapped in capital’s automation plan – to make everything faster and cheaper. But that’s the result of bosses. It’s not the result of technology.

This is not to say that technology is apolitical. Only a fool would imagine that there are no politics embedded in technology. But you’d be a far greater fool if you asserted that the politics of a technology were simple, clear, and immutable.

Nor is this to say that when workers get to decide when and how to use technology, we will always make wise decisions. Perhaps the hobbyist who opts for an automated soldering machine will lose out on the opportunity to refine their hand-eye coordination in ways that will have many other benefits to their practice.

Or perhaps attempting to improve their hand-eye coordination to that point will wreck so many projects that they grow discouraged and give up altogether. Others’ choices that seem unwise to you might have perfectly good explanations that aren’t visible from your perspective. Ultimately, the world is a better place where workers get to decide which parts of their jobs they want to automate and which parts they want to lean into.

This is an extremely normal technological situation: for a new technology to be promoted and productized by shitty people who have grandiose goals that would be apocalyptic should they ever come to pass – and for some people to find uses of that technology that are nevertheless beneficial to them and their communities.

The belief that AI is an exceptionally bad technology (as opposed to an exceptionally bad economic bubble) drives AI critics into their own absurd culs-de-sac.

There are many, many skilled and reliable practitioners of technical and creative trades who’ve found extremely reasonable, normal ways in which AI has automated some part of their job. They aren’t hyperventilating about how AI has changed everything forever and the world is about to end. They’re not mistaking AI for god, or a therapist.

They’re just treating AI like a normal technology, like a plugin. Programmers’ tools have acquired useful automation plugins at regular intervals for decades – syntax checkers, advanced debuggers, automated wireframe utilities. For many programmers – including several of my acquaintance, whom I know to be both thoughtful and skilled – AI is another plugin, one they find useful enough to be modestly enthusiastic about.

It is nuts to deny the experiences these people are having. They’re not vibe-coding mission-critical AWS modules. They’re not generating tech debt at scale:

https://pluralistic.net/2026/01/06/1000x-liability/#graceful-failure-modes

They’re just adding another automation tool to a highly automated practice, and using it when it makes sense. Perhaps they won’t always choose wisely, but that’s normal too. There’s plenty of ways that pre-AI automation tools for software development led programmers astray. A skilled, centaur-configured programmer learns from experience which automation tools they should trust, and under which circumstances, and guides themselves accordingly.

It’s only the belief that AI is exceptional – exceptionally wicked, but exceptional nevertheless – that leads critics to decide that they are a better judge of whether a skilled worker should or should not use certain automation tools, and to make that judgment not based on the quality of the work in question, but on the moral character of the tool itself.

AI is just normal. The bubble is what drives the environmental costs. If the only LLMs were a couple big data-centers at Sandia National Labs, no one would be particularly exercised about the water and energy demands they represented. Big scientific endeavors – from NASA launches to the Large Hadron Collider – often come with immense material and energy needs. The bubble causes massive, wasteful, duplicative efforts that chase diminishing returns through farcical scale.

Nor are AI bros exceptional. The stock swindlers who’ve blown $700b (and counting) on AI aren’t cyber-Svengalis with the power to cloud investors’ minds. They’re just running the same con that tech has been running ever since its returns started to taper off and survival became a matter of ginning up enthusiasm for speculative new ventures.

That doesn’t mean those people aren’t awful shits. Fuck those people. It just means that they’re normal awful shits. We don’t have to burnish their reputations by elevating them to the status of archdemons who taint everything they touch with unwashable sin. Sam Altman isn’t Lex Luthor. He’s just a conman.

The fact that these bros are just normal assholes means that we don’t have to treat everything they do as a sin. Scraping the entirety of human knowledge to make something new out of it isn’t “stealing.” Depending on why you’re doing it, it can be archiving, or making a search engine:

https://pluralistic.net/2023/09/17/how-to-think-about-scraping/

Too many AI critics have started from the undeniable fact that these guys are odious creeps who boast about wanting to ruin the lives of workers and then worked backwards to find the sin. The sin isn’t performing mathematical analysis on all the books ever written. That’s actually kind of awesome. It’s the kind of thing Aaron Swartz used to do – like when he ingested every law review article ever published and used it to trace the way that oil companies’ donations to law schools resulted in profs writing articles about why Big Oil can’t be held liable for trashing the planet.

AI bros’ sin isn’t making copies of published works. Hammering servers with badly behaved crawlers is a dick move and fuck them for doing it. But if these jerks made well-behaved scrapers that placed no abnormal demand on servers, it’s not like their critics would say, “Oh, I guess it’s fine, then.”

AI bros’ sin is running an economy-destroying, planet-wrecking stock swindle whose raison d’etre is pauperizing every worker and transferring 100% of the dying world’s wealth to a small cadre of morbidly wealthy, eminently guillotineable plutes. Making plugins? That’s not exceptional. It’s just normal.

The fact that something is normal doesn’t make it good. There’s a lot of normal things that I’d like to throw into the Sun. But we don’t do ourselves any favors when we amplify our enemies’ self-aggrandizing narratives by accusing them of being exceptional, even when we mean “exceptionally evil.” They’re normal assholes.

Fuck ’em.

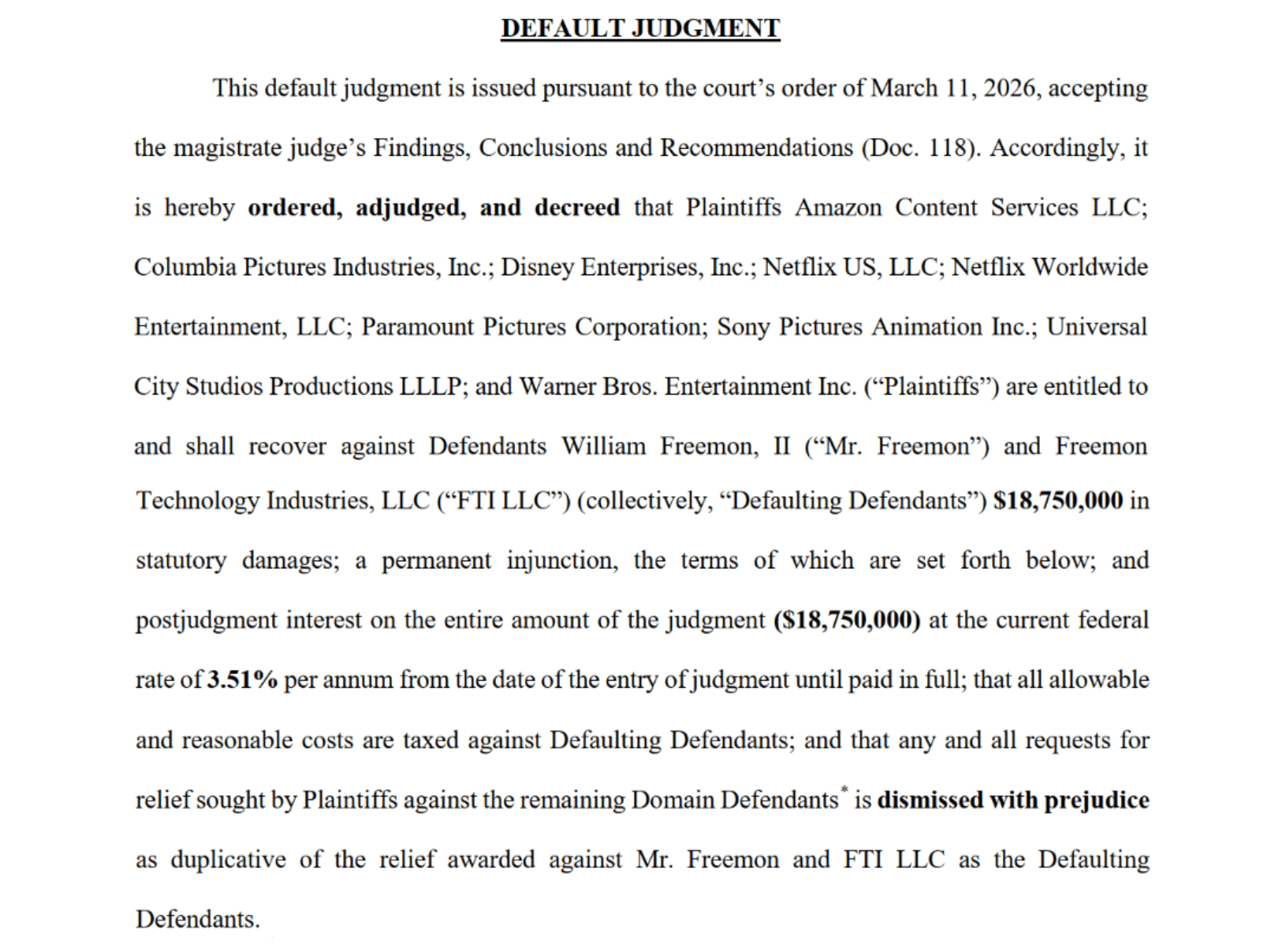

(Image: ZeptoBars, CC BY 3.0, modified)

Hey look at this

- E is for…. Enshittification https://www.evanshunt.com/enshittification/

- Calicornication: Postcards of Giant Produce (1909) https://publicdomainreview.org/collection/giant-produce-postcards/

- Organized Money: Why Your Lamp Sucks https://prospect.org/2026/03/11/organized-money-lamps-lighting-mid-century-modeline-history/

- The Live Nation settlement has industry insiders baffled https://www.theverge.com/policy/893272/live-nation-ticketmaster-doj-settlement-states

- Public speakerphone use is officially out of control https://arstechnica.com/culture/2026/03/explain-it-like-im-5-why-is-everyone-on-speakerphone-in-public/

Object permanence

#15yrsago Notorious financier gets a “super-injunction” prohibiting the press from revealing that he is a banker https://www.telegraph.co.uk/finance/newsbysector/banksandfinance/8373535/Sir-Fred-Goodwin-former-RBS-chief-obtains-super-injunction.html

#10yrsago Shortly after her death, Harper Lee’s heirs kill cheap paperback edition of To Kill a Mockingbird https://newrepublic.com/article/131400/mass-market-edition-kill-mockingbird-dead

#10yrsago Web security company breached, client list (including KKK) dumped, hackers mock inept security https://arstechnica.com/information-technology/2016/03/after-an-easy-breach-hackers-leave-tips-when-running-a-security-company/

#10yrsago Microsoft spams corporate users with messages denigrating their IT departments https://web.archive.org/web/20160309195537/https://www.infoworld.com/article/3042397/microsoft-windows/admins-beware-domain-attached-pcs-are-sprouting-get-windows-10-ads.html

#10yrsago Cycle and Recycle: gorgeous photos of the European recycling process https://www.wired.com/2016/03/paul-bulteel-cycle-recyle-europe-recycles-tons-of-waste-and-its-pretty-gorgeous/

#10yrsago Fellowships for “Robin Hood” hackers to help poor people get access to the law https://web.archive.org/web/20160304221459/https://labs.robinhood.org/fellowship/

#10yrsago 3D printed battle-armor for cats https://web.archive.org/web/20160311224139/http://sinkhacks.com/making-3d-printed-cat-armor/

#10yrsago Great moments in the history of black science fiction https://web.archive.org/web/20160308034421/http://www.fantasticstoriesoftheimagination.com/a-crash-course-in-the-history-of-black-science-fiction/

#1yrago Daniel Pinkwater’s “Jules, Penny and the Rooster” https://pluralistic.net/2025/03/11/klong-you-are-a-pickle-2/#martian-space-potato

Upcoming appearances

- Barcelona: Enshittification with Simona Levi/Xnet (Llibreria Finestres), Mar 20
https://www.llibreriafinestres.com/evento/cory-doctorow/

- Berkeley: Bioneers keynote, Mar 27
https://conference.bioneers.org/

- Montreal: Bronfman Lecture (McGill), Apr 10
https://www.eventbrite.ca/e/artificial-intelligence-the-ultimate-disrupter-tickets-1982706623885

- London: Resisting Big Tech Empires (LSBU)
https://www.tickettailor.com/events/globaljusticenow/2042691

- Berlin: Re:publica, May 18-20
https://re-publica.com/de/news/rp26-sprecher-cory-doctorow

- Berlin: Enshittification at Otherland Books, May 19
https://www.otherland-berlin.de/de/event-details/cory-doctorow.html

- Hay-on-Wye: HowTheLightGetsIn, May 22-25
https://howthelightgetsin.org/festivals/hay/big-ideas-2

Recent appearances

- Launch for Cindy Cohn’s “Privacy’s Defender” (City Lights)
https://www.youtube.com/watch?v=WuVCm2PUalU

- Chicken Mating Harnesses (This Week in Tech)
https://twit.tv/shows/this-week-in-tech/episodes/1074

- The Virtual Jewel Box (U Utah)
https://tanner.utah.edu/podcast/enshittification-cory-doctorow-matthew-potolsky/

- Tanner Humanities Lecture (U Utah)
https://www.youtube.com/watch?v=i6Yf1nSyekI

- The Lost Cause
https://streets.mn/2026/03/02/book-club-the-lost-cause/

Latest books

- “Canny Valley”: A limited edition collection of the collages I create for Pluralistic, self-published, September 2025 https://pluralistic.net/2025/09/04/illustrious/#chairman-bruce

- “Enshittification: Why Everything Suddenly Got Worse and What to Do About It,” Farrar, Straus, Giroux, October 7 2025
https://us.macmillan.com/books/9780374619329/enshittification/

- “Picks and Shovels”: a sequel to “Red Team Blues,” about the heroic era of the PC, Tor Books (US), Head of Zeus (UK), February 2025 (https://us.macmillan.com/books/9781250865908/picksandshovels).

- “The Bezzle”: a sequel to “Red Team Blues,” about prison-tech and other grifts, Tor Books (US), Head of Zeus (UK), February 2024 (thebezzle.org).

- “The Lost Cause:” a solarpunk novel of hope in the climate emergency, Tor Books (US), Head of Zeus (UK), November 2023 (http://lost-cause.org).

- “The Internet Con”: A nonfiction book about interoperability and Big Tech (Verso) September 2023 (http://seizethemeansofcomputation.org). Signed copies at Book Soup (https://www.booksoup.com/book/9781804291245).

- “Red Team Blues”: “A grabby, compulsive thriller that will leave you knowing more about how the world works than you did before.” Tor Books http://redteamblues.com.

- “Chokepoint Capitalism: How to Beat Big Tech, Tame Big Content, and Get Artists Paid, with Rebecca Giblin”, on how to unrig the markets for creative labor, Beacon Press/Scribe 2022 https://chokepointcapitalism.com

Upcoming books

- “The Reverse-Centaur’s Guide to AI,” a short book about being a better AI critic, Farrar, Straus and Giroux, June 2026

- “Enshittification, Why Everything Suddenly Got Worse and What to Do About It” (the graphic novel), FirstSecond, 2026

- “The Post-American Internet,” a geopolitical sequel of sorts to Enshittification, Farrar, Straus and Giroux, 2027

- “Unauthorized Bread”: a middle-grades graphic novel adapted from my novella about refugees, toasters and DRM, FirstSecond, 2027

- “The Memex Method,” Farrar, Straus, Giroux, 2027

Colophon

Currently writing:

- “The Post-American Internet,” a sequel to “Enshittification,” about the better world the rest of us get to have now that Trump has torched America (1081 words today, 48461 total)

- “The Reverse Centaur’s Guide to AI,” a short book for Farrar, Straus and Giroux about being an effective AI critic. LEGAL REVIEW AND COPYEDIT COMPLETE.

- “The Post-American Internet,” a short book about internet policy in the age of Trumpism. PLANNING.

- A Little Brother short story about DIY insulin. PLANNING.

This work – excluding any serialized fiction – is licensed under a Creative Commons Attribution 4.0 license. That means you can use it any way you like, including commercially, provided that you attribute it to me, Cory Doctorow, and include a link to pluralistic.net.

https://creativecommons.org/licenses/by/4.0/

Quotations and images are not included in this license; they are included either under a limitation or exception to copyright, or on the basis of a separate license. Please exercise caution.

How to get Pluralistic:

Blog (no ads, tracking, or data-collection):

Newsletter (no ads, tracking, or data-collection):

https://pluralistic.net/plura-list

Mastodon (no ads, tracking, or data-collection):

Bluesky (no ads, possible tracking and data-collection):

https://bsky.app/profile/doctorow.pluralistic.net

Medium (no ads, paywalled):

https://doctorow.medium.com/

https://twitter.com/doctorow

Tumblr (mass-scale, unrestricted, third-party surveillance and advertising):

https://mostlysignssomeportents.tumblr.com/tagged/pluralistic

“When life gives you SARS, you make sarsaparilla” -Joey “Accordion Guy” DeVilla

READ CAREFULLY: By reading this, you agree, on behalf of your employer, to release me from all obligations and waivers arising from any and all NON-NEGOTIATED agreements, licenses, terms-of-service, shrinkwrap, clickwrap, browsewrap, confidentiality, non-disclosure, non-compete and acceptable use policies (“BOGUS AGREEMENTS”) that I have entered into with your employer, its partners, licensors, agents and assigns, in perpetuity, without prejudice to my ongoing rights and privileges. You further represent that you have the authority to release me from any BOGUS AGREEMENTS on behalf of your employer.

ISSN: 3066-764X